Strategic 360 Reviews: Using Holistic Feedback to Fuel the IDP (Learning Loop)

Most 360-degree feedback programs end exactly where they should begin.

In the typical mid-market company, a 360 review follows a predictable and disappointing path. HR launches the survey. Peers write anonymous comments. The manager hands the employee a 40-page PDF report filled with charts and spider graphs. The employee reads it, feels overwhelmed or defensive, and files it away in a digital drawer, never to be seen again.

This is a Data Graveyard.

The purpose of feedback is not to report on the past. It is to fuel the future. If the data does not lead to behavior change, the time spent collecting it was wasted.

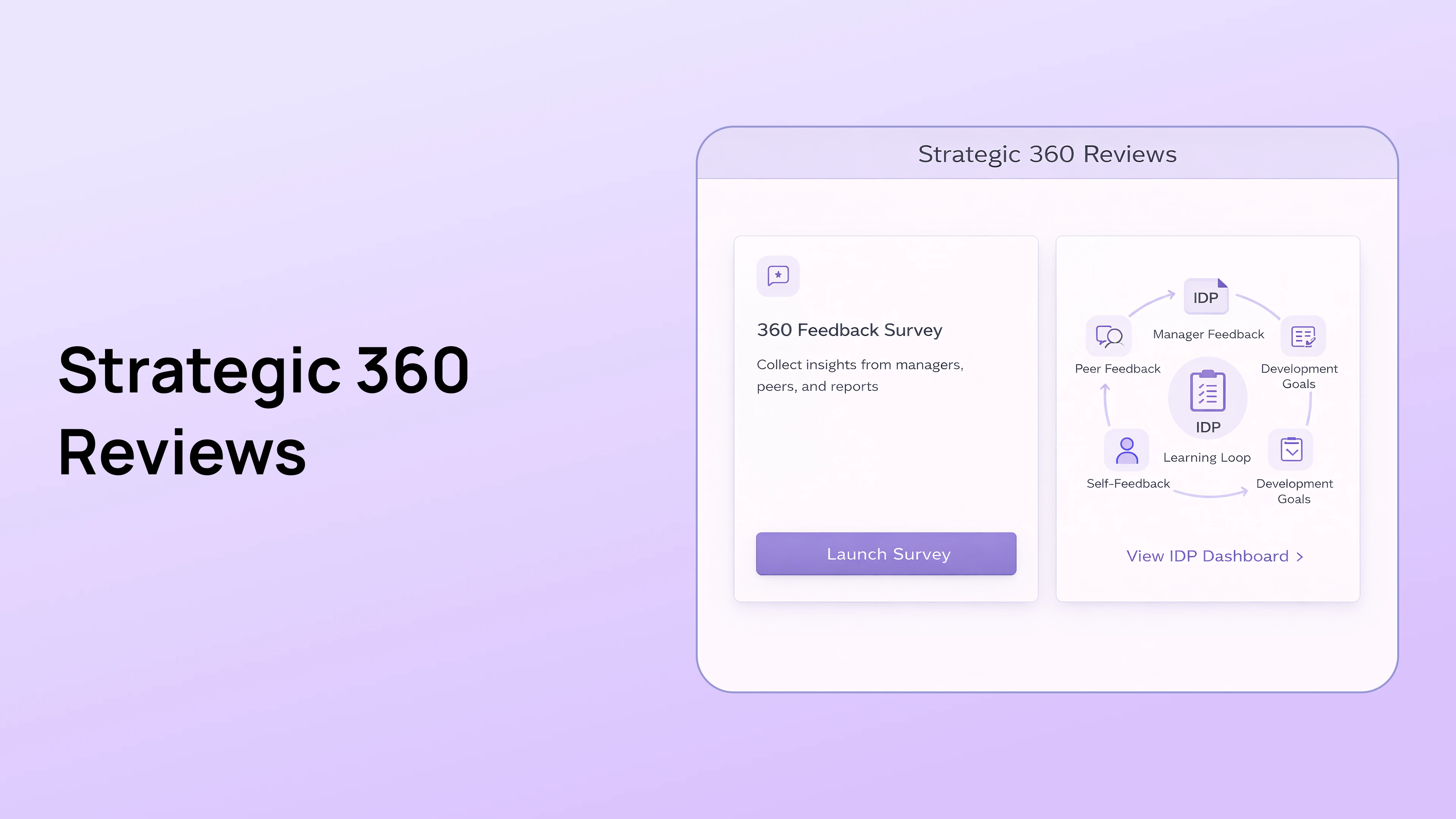

In the PerformSpark Strategy, we view the 360 Review as the fuel for the Individual Development Plan (IDP). This connection creates a "Learning Loop" where feedback automatically triggers training recommendations. This guide explains how to move your 360 process from a passive report to an active growth engine.

What is the difference between a Developmental 360 and an Evaluative 360?

A Developmental 360 is strictly for growth and learning, whereas an Evaluative 360 is used to measure performance for compensation. You must clearly distinguish between these two to build trust.

The Trust Gap

If employees believe their peer feedback will determine a colleague's bonus, they will game the system. They will trade good reviews with their friends to ensure everyone gets a raise. We call this the "scratch my back" effect. Conversely, if they dislike a colleague, they might weaponize the survey to hurt that colleague's financial standing.

The Strategic Choice

For the Learning Loop we are discussing today, you must use Developmental 360s. You need raw, honest data about blind spots. This level of candor only happens when the stakes of pay are removed from the equation. The goal is to say "Here is where you can grow" rather than "Here is why you are not getting a raise."

Why do most 360-degree feedback programs fail?

Most programs fail because they identify the Diagnosis (weaknesses) but fail to provide the Prescription (training). This is known as the Next Step Gap.

What is the "So What" problem?

The "So What" problem occurs when feedback provides data without direction. Imagine telling a Sales Manager that their peers scored them a 2 out of 5 on Active Listening. Without a next step, the manager's reaction is almost always defensiveness. They will argue that the data is wrong or that their peers do not understand their role. The feedback loop breaks because there is no mechanism to turn that data point into action.

How does Analysis Paralysis affect learning?

Legacy 360 reports are often too dense for the average employee to digest. They provide scores on 20 different competencies ranging from Communication to Strategic Thinking. The employee looks at the report and does not know where to start. Psychology tells us that if you give someone 20 priorities, they have zero priorities. A successful program must force focus on just one or two key areas.
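As a rough illustration of forcing focus, the sketch below narrows a full competency report down to its one or two lowest-scoring areas. The function name `pick_focus_areas`, the sample scores, and the "lowest score wins" rule are all assumptions made for this example, not a description of how PerformSpark ranks competencies.

```python
from typing import Dict, List


def pick_focus_areas(scores: Dict[str, float], max_areas: int = 2) -> List[str]:
    """Return the lowest-scoring competencies, capped at max_areas."""
    # Sort ascending by score; ties are broken alphabetically so the
    # result is deterministic.
    ranked = sorted(scores.items(), key=lambda item: (item[1], item[0]))
    return [name for name, _ in ranked[:max_areas]]


# Hypothetical average peer scores on a 1-5 scale.
report = {
    "Communication": 3.8,
    "Strategic Thinking": 4.1,
    "Active Listening": 2.0,
    "Running Meetings": 2.6,
    "Delegation": 3.2,
}

focus = pick_focus_areas(report)
print(focus)  # ['Active Listening', 'Running Meetings']
```

In practice the manager and employee would still choose between these candidates in conversation; the cap only keeps the shortlist short.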

How does the Golden Thread connect 360s to IDPs?

The Golden Thread is the digital architecture that automatically pipes feedback data directly into the Individual Development Plan (IDP) to trigger specific training recommendations.

In PerformSpark, the feedback does not sit in a silo. It flows through the system to create actionable learning via a structured workflow.

- The Signal: The 360 Review identifies a specific gap. For example, peer feedback flags "Difficulty running effective meetings."

- The Connection: The system highlights this competency gap and prompts the user with a specific notification. It asks if they want to add this growth area to their plan.

- The Action: With one click, the employee adds the goal to their IDP Module.

- The Content: The IDP automatically suggests relevant content. It might recommend a specific course or an internal mentorship session with a senior leader who excels at that skill.

This transforms the 40-page PDF report into a single, actionable To-Do list.
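The four steps above can be sketched as a small data flow. This is a minimal illustration under stated assumptions: the `IDP` class, `build_prompt`, and the `CONTENT_CATALOG` entries are hypothetical names invented for the example and do not reflect PerformSpark's actual data model or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical catalog mapping a competency gap to learning content.
CONTENT_CATALOG: Dict[str, List[str]] = {
    "Running effective meetings": [
        "Course: Meeting Facilitation Basics",
        "Mentorship: session with a senior leader who excels at this skill",
    ],
}


@dataclass
class IDP:
    """A toy Individual Development Plan: goal -> suggested content."""
    goals: Dict[str, List[str]] = field(default_factory=dict)

    def add_goal(self, competency: str) -> List[str]:
        # The Action and The Content: store the goal and attach suggestions.
        self.goals[competency] = list(CONTENT_CATALOG.get(competency, []))
        return self.goals[competency]


def build_prompt(gap: str) -> str:
    # The Connection: the notification shown to the employee.
    return f'Your 360 flagged "{gap}". Add this growth area to your plan?'


gap = "Running effective meetings"  # The Signal, taken from peer feedback
prompt = build_prompt(gap)
idp = IDP()
accepted = True  # stands in for the employee's one click
if accepted:
    suggestions = idp.add_goal(gap)

print(prompt)
print(idp.goals)
```

The point of the sketch is the shape of the hand-off: the feedback record never has to be re-entered by hand, and the plan ends up with a single goal plus concrete content attached to it.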

What is the Johari Window in the context of 360s?

The Johari Window is a psychological framework used to visualize Blind Spots, which are behaviors that others see in you but you do not see in yourself.

The Four Quadrants

To get buy-in for 360s, you must explain that the 360 is the only tool designed to unlock the Blind Spot quadrant.

- The Open Area: These are things you know and others know, such as your technical coding skills. You do not need a 360 for this as it is public knowledge.

- The Hidden Area: These are things you know but hide from others, such as a fear of public speaking. You do not need a 360 for this as it is private.

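- The Blind Spot: These are things others see but you miss, such as interrupting people in meetings. This is the sole purpose of the 360.

- The Unknown Area: These are things neither you nor others know yet, such as how you would handle a role you have never held. A 360 cannot surface this, because no rater has observed it.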

Framing the Launch

When you launch your 360 program, frame it to your teams using the Johari Window concept. Tell them clearly that the goal is not to fish for compliments. The goal is to hunt for Blind Spots so they can be addressed.

How does TrAI analyze peer feedback for bias?

TrAI, our ethical intelligence engine, acts as a real-time filter that scans open-ended comments for non-constructive language, personality critiques, or bias before the report is finalized.

One of the biggest fears employees have regarding 360s is receiving toxic or biased comments. TrAI solves this by enforcing feedback hygiene.

Personality vs. Behavior

Feedback should always be about what you do, not who you are. If a reviewer writes "He is lazy," TrAI flags it immediately. It prompts the reviewer to rewrite the comment to focus on specific observed behaviors, such as missing deadlines or arriving late. This turns an insult into a coaching opportunity.
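To make the personality-versus-behavior distinction concrete, here is a deliberately simple keyword check. It is not how TrAI works internally, which this guide does not describe; the word list `TRAIT_WORDS`, the regular expression, and the function `review_comment` are assumptions chosen only to illustrate the idea of prompting for a rewrite.

```python
import re
from typing import Optional

# Hypothetical list of trait labels that judge the person, not the behavior.
TRAIT_WORDS = {"lazy", "arrogant", "rude", "careless"}

# Matches phrases such as "He is lazy" or "They are rude".
TRAIT_PATTERN = re.compile(
    r"\b(?:he|she|they)\s+(?:is|are)\s+(\w+)", re.IGNORECASE
)


def review_comment(comment: str) -> Optional[str]:
    """Return a rewrite prompt if the comment labels the person, else None."""
    match = TRAIT_PATTERN.search(comment)
    if match and match.group(1).lower() in TRAIT_WORDS:
        return (
            f'"{match.group(1)}" describes the person, not the behavior. '
            "What did you observe, for example missed deadlines or late arrivals?"
        )
    return None


print(review_comment("He is lazy"))
print(review_comment("He missed three deadlines in March"))  # None
```

A real filter would need far more than a word list, but the flow is the same: detect the label, then ask the reviewer for the observed behavior behind it.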

Gender Bias Detection

Research consistently shows that women often receive vague feedback about communication style while men receive specific feedback about business outcomes. If TrAI detects patterns of gendered language, such as describing women as "emotional" versus men as "passionate," it alerts the HR Admin to review the dataset before release.

How do you launch a feedback cycle that drives learning?

A successful feedback cycle follows the SCAP method: Select, Collect, Analyze, and Plan.

Step 1: Select (The Raters)

Use data to select raters, not just friendship. Do not let employees pick only their friends as raters. This creates a Cheerleader Effect where everyone gets 5 stars. Use the Check-ins Data to see who they actually work with. The system should suggest raters based on collaboration history to ensure the feedback is relevant.
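One way to picture data-driven rater selection is to count how often each colleague appears alongside the employee in check-in records and suggest the most frequent collaborators. The record shape (pairs of names), the function `suggest_raters`, and the sample data are assumptions for this sketch; the actual Check-ins Data schema is not specified in this guide.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def suggest_raters(
    employee: str,
    check_ins: Iterable[Tuple[str, str]],
    limit: int = 5,
) -> List[str]:
    """Rank colleagues by how often they share a check-in with the employee."""
    counts: Counter = Counter()
    for a, b in check_ins:
        if a == employee and b != employee:
            counts[b] += 1
        elif b == employee and a != employee:
            counts[a] += 1
    # Most frequent collaborators first; ties broken alphabetically.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:limit]]


# Hypothetical check-in log: each tuple is one recorded interaction.
log = [
    ("ana", "ben"), ("ana", "ben"), ("cy", "ana"),
    ("ana", "dee"), ("ana", "dee"), ("ana", "dee"),
    ("ben", "cy"),
]

print(suggest_raters("ana", log, limit=2))  # ['dee', 'ben']
```

The default `limit` of 5 simply mirrors the upper end of the 3 to 5 raters recommended in the FAQ; the suggestions are a starting list for HR or the manager to confirm, not a final selection.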

Step 2: Collect (The Nudge)

Keep the survey short and use the Nudge Engine to automate follow-ups. Short surveys get 90% completion rates, while long surveys often drop below 30%, so focus on the core competencies that matter to your culture. The Nudge Engine sends automated reminders via Slack or Teams to ensure peers complete the reviews on time.

Step 3: Analyze (The TrAI Summary)

Use AI to summarize themes. Do not make employees read raw data rows. TrAI summarizes the themes automatically. It might say, "Your top strength is Technical Strategy, but your top blind spot is Cross-Functional Communication." This helps the employee digest the feedback without getting stuck on a single negative comment.

Step 4: Plan (The IDP)

Use the Feedback Debrief to set goals. The cycle is not complete until the IDP is updated. Managers must hold a debrief meeting where the agenda is strictly forward-looking. The only question that matters is: "Which one skill from this report are we going to add to your development plan?"

Conclusion

A 360 Review without an IDP is just noise. It creates anxiety without offering a solution.

When you connect the two and use Strategic 360 Reviews to populate the Learning Plan, you stop just measuring performance and start building it. You turn the Data Graveyard into a Launchpad. You empower your employees to take ownership of their own growth, supported by data rather than opinion.

The next step in your journey is to formalize that growth plan.

Book a Consultative Demo and see how PerformSpark turns Feedback into Learning.

Frequently Asked Questions

How many raters should be included in a 360 review?

We recommend between 3 and 5 raters. This provides enough data to triangulate trends (if 3 people say it, it is likely true) without overwhelming the employee with too much information. A mix of Peers, Direct Reports, and Cross-Functional partners is ideal to get a holistic view.

Should 360 feedback be anonymous?

Yes, for Developmental 360s, anonymity is crucial to get honest data about blind spots. If feedback is attributed, peers will soften their language to avoid conflict. However, the feedback must be filtered by tools like TrAI to prevent toxic anonymity and ensure comments remain constructive.

Can 360 feedback be used for performance appraisals?

We strongly advise against it. If you use peer feedback for pay (Evaluative), employees will game the system by trading positive reviews. Keep 360s focused on development and use Manager Reviews and Goal Data for compensation decisions.

How often should we run 360 reviews?

Run them annually or bi-annually. Unlike Check-ins, which happen weekly, broad peer feedback takes time to collect and digest. Running them too often leads to survey fatigue and reduces the quality of the responses.

What is the biggest mistake managers make with 360s?

The biggest mistake is the Dump and Run, where the manager hands over the report and never discusses it. The manager's role is to act as a filter and coach, helping the employee interpret the data and choose one focus area for their IDP. Without this coaching conversation, the data is rarely acted upon.