Performance Management for Remote Teams: What Works in 2026

Managing performance in a remote or hybrid team is fundamentally different from managing performance in an office, not because the goals of performance management change, but because the informal infrastructure that supports it disappears.

In an office, a manager observes how employees show up, notices when someone seems disengaged, has spontaneous hallway conversations that surface problems early, and gives real-time feedback close to the event. Remote and distributed teams lose all of this. What replaces it must be explicit, documented, and consistent, built into the rhythm of the team rather than left to chance.

This page covers the specific adjustments that make performance management work across distributed and hybrid teams, the common failure modes, and how to build a system that maintains accountability and fairness regardless of where each team member is located.

Why Does Performance Management Change for Remote Teams?

In-person management relies heavily on proximity as a feedback signal. Managers see who is at their desk, notice behavioral changes, and have informal check-ins that happen naturally in shared spaces. Remote management has none of this. Every performance signal must be deliberately created and captured.

This shift has three specific implications:

- Recency bias is amplified. Without regular documented check-ins, managers in remote teams write performance reviews based on what they can remember, which is almost always the last few weeks. Structured 1-on-1 documentation is the primary defense against this.

- Visibility gaps favor certain working styles. Employees who communicate frequently in Slack or on video tend to be perceived as higher performers regardless of their actual output. Outcome-based performance criteria level this playing field and make ratings more defensible.

- Calibration inconsistency compounds. Remote managers often have less peer visibility than in-office managers. Without calibration sessions, rating standards diverge more quickly across distributed teams than across co-located ones.

What Are the Biggest Remote Performance Management Challenges?

Maintaining consistent feedback loops

In-office feedback happens informally and continuously. Remote feedback must be scheduled and structured. Without a recurring 1-on-1 cadence, feedback gaps of weeks or months develop, and employees in those gaps lose clarity on their performance and development.

Solution: Build a biweekly 1-on-1 template with consistent talking points: goal progress, blockers, development, and priorities for the next period. Use a performance management platform that logs these conversations so the record exists when review time arrives.

Managing performance across time zones

Asynchronous teams cannot rely on real-time feedback alone. When a manager and employee are eight hours apart, the feedback loop needs to function asynchronously: written feedback in the platform, goal updates in a shared system, and check-in notes that do not require simultaneous availability.

Solution: Establish clear asynchronous communication protocols: preferred channels, expected response times, and what constitutes a formal check-in record versus a casual message. Document performance conversations in the system, not in chat tools that do not preserve a searchable record.

Measuring output rather than presence

Remote managers who default to monitoring hours worked, login times, or message frequency are measuring activity, not performance. These metrics create a surveillance culture, damage trust, and are poor proxies for actual contribution.

Solution: Define role-specific outcome metrics before the review cycle begins. What does a strong quarter look like for this role, in terms of deliverables and impact, not hours? OKRs and SMART goals force this definition and make review conversations factual rather than impressionistic.

Running calibration across distributed manager groups

Calibration requires comparing ratings across managers. For distributed teams where managers may never be in the same room, this session needs to be deliberately structured, run virtually with a clear agenda, and informed by pre-computed data rather than live memory.

Solution: Use a performance management platform with built-in calibration tools that surface rating distribution outliers before the session begins. With TrAI in PerformSpark, rating inconsistencies across all managers are identified automatically, so the calibration session can focus on resolving flagged outliers rather than building the comparison from scratch.

How Do You Run Effective 1-on-1 Check-Ins With Remote Employees?

The 1-on-1 check-in is the most important performance management tool available to a remote manager. It is where goal progress is discussed, blockers are surfaced, feedback is delivered, and development is addressed, in a scheduled, consistent, documented format that replaces the informal daily interactions that in-office teams have naturally.

The most effective remote 1-on-1 check-ins share four characteristics:

- They happen on a consistent schedule. Biweekly is the right default for most roles. A check-in that managers cancel or reschedule regularly signals that performance conversations are optional, which creates disengagement and trust erosion.

- They use a consistent agenda structure. Goal progress, blockers, development, and near-term priorities. This structure keeps the conversation productive and ensures every check-in covers the information needed for review-time documentation.

- They are documented in the performance management system, not in chat. Notes from check-ins that live in Slack threads or email inboxes are effectively lost by review time. Notes in the performance platform create a searchable record that informs review writing and provides evidence if performance concerns need to be addressed.

- They are two-directional. The employee should have an equal voice in setting the agenda. Remote employees who feel that check-ins are status reports for the manager rather than development conversations for them disengage from the process quickly.
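As a rough illustration of the consistent agenda and consistent schedule described above, here is a minimal Python sketch of a check-in record and a cadence check. The `CheckIn` class, its field names, and the `cadence_gaps` helper are hypothetical, invented for this example; they are not part of any specific platform. The 14-day limit reflects the biweekly default and is an adjustable assumption.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CheckIn:
    """One documented 1-on-1, using the four agenda items as fields (hypothetical schema)."""
    held_on: date
    goal_progress: str
    blockers: str
    development: str
    next_priorities: str


def cadence_gaps(check_ins, max_days=14):
    """Return (earlier, later) date pairs where consecutive check-ins are more than max_days apart."""
    dates = sorted(c.held_on for c in check_ins)
    return [(a, b) for a, b in zip(dates, dates[1:]) if (b - a).days > max_days]


# Example data: the third check-in slipped by several weeks.
history = [
    CheckIn(date(2026, 1, 5), "On track", "None", "Mentoring plan", "Ship v1"),
    CheckIn(date(2026, 1, 19), "Slight delay", "Waiting on API", "Course booked", "Unblock API"),
    CheckIn(date(2026, 2, 23), "Recovered", "None", "Course done", "Plan Q2"),
]
gaps = cadence_gaps(history)
```

With this data, `gaps` contains the single pair January 19 to February 23, the kind of multi-week silence a recurring cadence is meant to prevent.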

How Do You Calibrate Performance Ratings Across a Distributed Team?

Calibration is more important, not less, for remote teams. When managers work in different locations and have less peer visibility into each other's teams, rating standards diverge more quickly. An uncalibrated remote performance system produces compensation and promotion decisions that reflect which manager someone works for more than what they actually contribute.

A virtual calibration session for a distributed team should follow the same structure as an in-person session:

- Pre-session: HR shares rating distribution data across all managers before the session. Outliers (managers whose distributions differ significantly from their peer group) are flagged in advance so the session can focus on those cases.

- In session: Managers present their flagged ratings with supporting evidence from goals, check-in notes, and feedback records. The group aligns on whether the rating reflects a genuine performance difference or a rating standard inconsistency.

- Post-session: Agreed adjustments are made before ratings are communicated to employees or used in compensation decisions.
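The pre-session flagging step can be sketched in a few lines of Python. This is one simple approach under stated assumptions, not a description of how any particular product computes outliers: it compares each manager's mean rating with the mean across managers and flags those more than `threshold` standard deviations away. The function name, the 1-to-5 scale, and the threshold of 1.0 are all illustrative choices.

```python
from statistics import mean, pstdev


def flag_outlier_managers(ratings_by_manager, threshold=1.0):
    """Return managers whose mean rating sits more than `threshold`
    standard deviations from the mean of all managers' means."""
    # Mean rating per manager, skipping managers with no ratings.
    manager_means = {m: mean(r) for m, r in ratings_by_manager.items() if r}
    values = list(manager_means.values())
    if len(values) < 2:
        return []
    overall = mean(values)
    spread = pstdev(values)
    if spread == 0:
        return []  # every manager rates identically on average
    return sorted(
        m for m, avg in manager_means.items()
        if abs(avg - overall) / spread > threshold
    )


# Hypothetical ratings on a 1-to-5 scale.
ratings = {
    "manager_a": [3, 3, 4, 3],
    "manager_b": [3, 4, 3, 3],
    "manager_c": [5, 5, 5, 5],
    "manager_d": [3, 3, 3, 4],
}
flagged = flag_outlier_managers(ratings)
```

Here only `manager_c` is flagged. A flag is a prompt for discussion in the session, not a verdict: the team may genuinely be stronger. With only a handful of managers, a mean-based comparison like this is crude, and comparing full rating distributions would be a reasonable refinement.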

TrAI pre-computes rating distribution outliers across all managers before the calibration session begins, eliminating the manual comparison step that makes remote calibration slow and inconsistent.

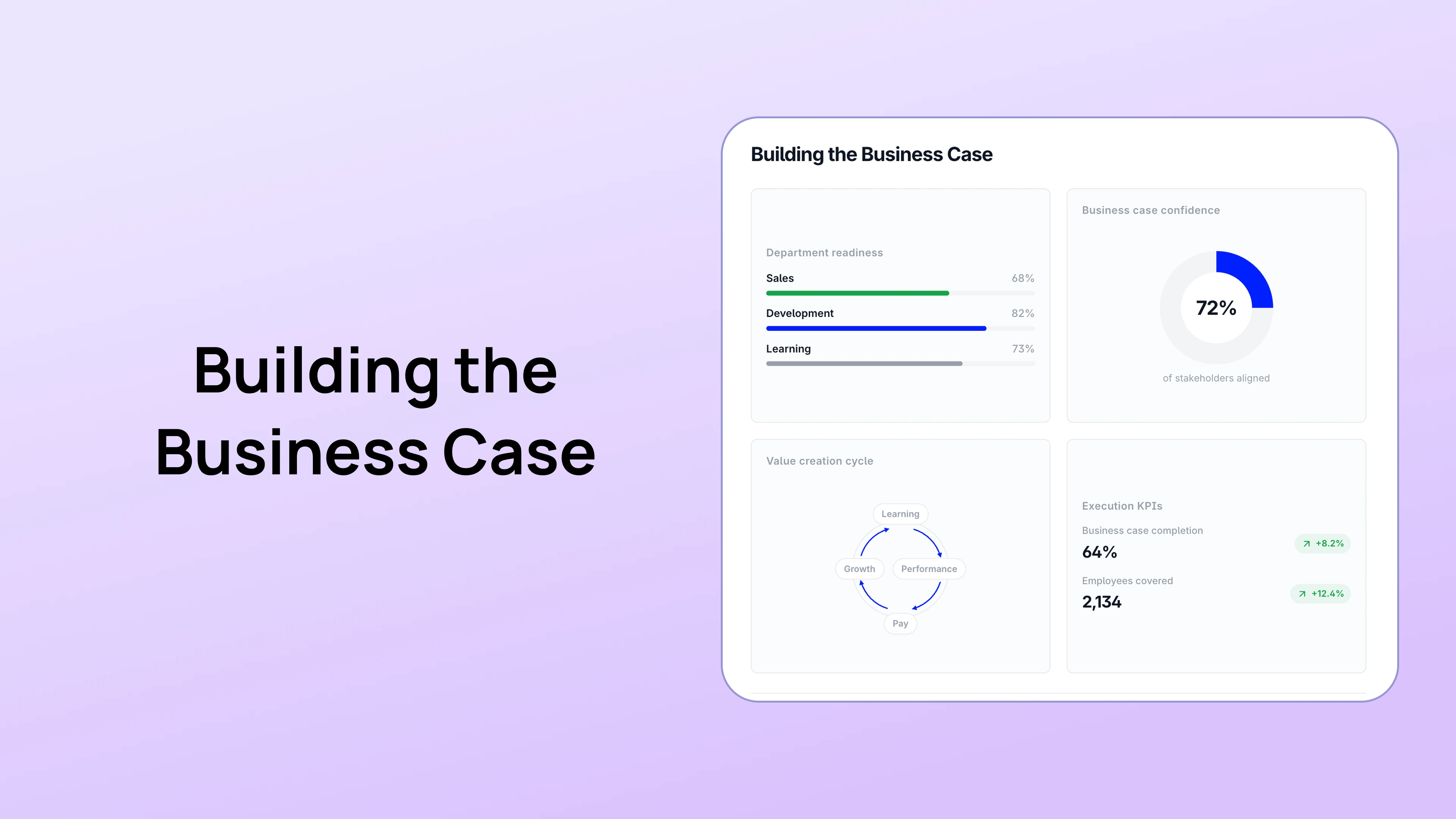

How Do You Choose the Right Tools for Remote Performance Management?

Remote teams need performance management software that works asynchronously, where check-in notes, feedback, and goal updates can be captured outside of a shared meeting, and where the record is accessible to both the manager and employee regardless of time zone.

The specific capabilities that matter most for distributed teams:

- Asynchronous-friendly check-in tools. Templates that both manager and employee can update before or after a conversation, not just during a live call.

- Goal visibility across the full team. Employees and managers should be able to see how individual goals connect to team and company objectives without being in the same room.

- Continuous feedback logging. The ability to record specific feedback close to the event it relates to, not just at review time. This is the primary defense against recency bias in remote performance reviews.

- Built-in calibration. Rating distribution comparison across all managers, pre-computed before the calibration session. For distributed teams, manual calibration is too slow and too error-prone.

- Development plan integration. IDPs that are accessible to both manager and employee and referenced in check-ins, not filed away between annual reviews.

PerformSpark includes all of these capabilities at $6 per user per month with no add-ons required. Every feature (goal management, check-ins, continuous feedback, performance reviews, AI-assisted calibration via TrAI, individual development plans, and performance improvement plans) is built for teams that do not share a physical space.

Performance Management Built for Distributed Teams

PerformSpark gives remote and hybrid teams the full performance cycle (goals, async-friendly check-ins, continuous feedback, calibration, IDPs, and TrAI) at $6 per user per month. No enterprise contracts. Setup in one to two weeks.

Frequently Asked Questions

How do you measure employee performance on a remote team?

Measure outcomes, not activity. Define role-specific deliverables and OKRs at the start of each review period. Assess performance against these documented expectations rather than against presence signals like hours worked or message frequency. Documented check-in notes and feedback records supplement goal progress to give a complete picture of performance during a review cycle.

How often should you check in with remote employees about performance?

Biweekly structured 1-on-1s are the right default for most remote roles. The key is consistency: a check-in that reliably happens every two weeks is more valuable than a weekly check-in that gets cancelled regularly. For new remote employees, new managers, or employees on performance improvement plans, more frequent check-ins are appropriate.

How do you run a performance review for a remote employee?

The same way you run any review, with specific behavioral evidence rather than general impressions. The difference for remote employees is that the evidence must come from documented check-in notes and feedback records rather than from memory or observation. Reviews written from documentation are more accurate, more complete, and more defensible than reviews written from memory of a remote working relationship.

How do you handle underperformance on a remote team?

Address it early, directly, and with specific documented examples. The absence of proximity makes it easier to avoid difficult conversations on remote teams: managers who only see an employee on a biweekly video call have fewer natural opportunities to deliver developmental feedback. Structured check-ins with a consistent agenda prevent this avoidance by creating a regular forum for honest performance conversations.

Is calibration necessary for remote and hybrid teams?

Yes, and it is more critical for remote teams than for co-located ones. When managers work in different locations and have less peer visibility, rating standards diverge faster. Uncalibrated remote performance systems produce inequitable compensation and promotion outcomes. Even a single virtual calibration session per review cycle, using pre-computed rating distribution data, significantly reduces this risk.

What is the best performance management software for remote teams?

The best platform for a remote team is one that works asynchronously, where check-ins, feedback, and goal tracking function without requiring both parties to be online at the same time. Key criteria: consistent check-in templates, continuous feedback logging, built-in calibration, development plan integration, and AI tools focused on decision quality rather than just writing assistance. PerformSpark is designed specifically for teams between 50 and 1,000 employees and includes all of these capabilities at a flat per-user rate.