TrAI vs. ChatGPT: Why Generic AI Can't Replace Specialized HR Intelligence

Every manager in your company has discovered the same secret. Writing performance reviews is tedious. Asking ChatGPT to write them takes five seconds.

Right now, your managers are copying and pasting bullet points, Slack messages, and private feedback about your employees into a public generative AI prompt box. They ask the AI to "make this sound professional." The AI complies, generating a beautifully written, completely generic block of text.

The manager hits submit. The HR team praises the well-written review.

This is a massive organizational failure.

Using generic AI to manage human performance introduces catastrophic data privacy risks, destroys the authenticity of managerial feedback, and exposes your company to severe legal liability regarding HR AI bias.

In the PerformSpark Strategy, we believe AI is the most powerful tool in the modern HR arsenal, but only if it is architected specifically for talent management.

This guide breaks down the critical differences between generic large language models and specialized HR intelligence. It explains why you must lock down your performance data and how TrAI protects your company while still saving your managers hours of administrative work.

The Three Dangers of the "Copy and Paste" AI Review

When a manager uses a generic tool like ChatGPT, Claude, or Gemini to write an employee review, they trigger three distinct enterprise risks.

The Data Privacy Nightmare

Public AI tools may retain the prompts users submit, and some use that data to train future models by default. If a manager types, "Write a performance improvement plan for Sarah Jenkins who is failing her Q3 sales quota of $500k," that sensitive corporate data is now on external servers.

For companies operating under GDPR, CCPA, or strict enterprise security protocols, this can amount to a serious data breach. You have just leaked sales targets, employee identities, and internal performance metrics to a third-party system without employee consent. Data privacy in HR tech is not a suggestion. It is a legal mandate.

The AI Hallucination Risk

Generative AI models are designed to predict the next plausible word. They are not designed to tell the truth. When a model produces fluent but false statements, the result is called hallucination.

If a manager provides sparse bullet points, a generic AI will invent context to make the paragraph sound complete. It might invent a project the employee never worked on or praise a skill the employee does not possess. When the employee reads a review containing fabricated achievements, they instantly realize their manager did not write it. Trust is permanently destroyed.

The Sterilization of Feedback

Good feedback requires nuance, empathy, and organizational context. Generic AI removes all three. It defaults to a sterilized, corporate tone that sounds exactly like a robot.

As we discussed in our guide on Giving Remote Feedback, psychological safety requires authenticity. You cannot build a high-performance culture if your employees feel they are being managed by a chatbot.

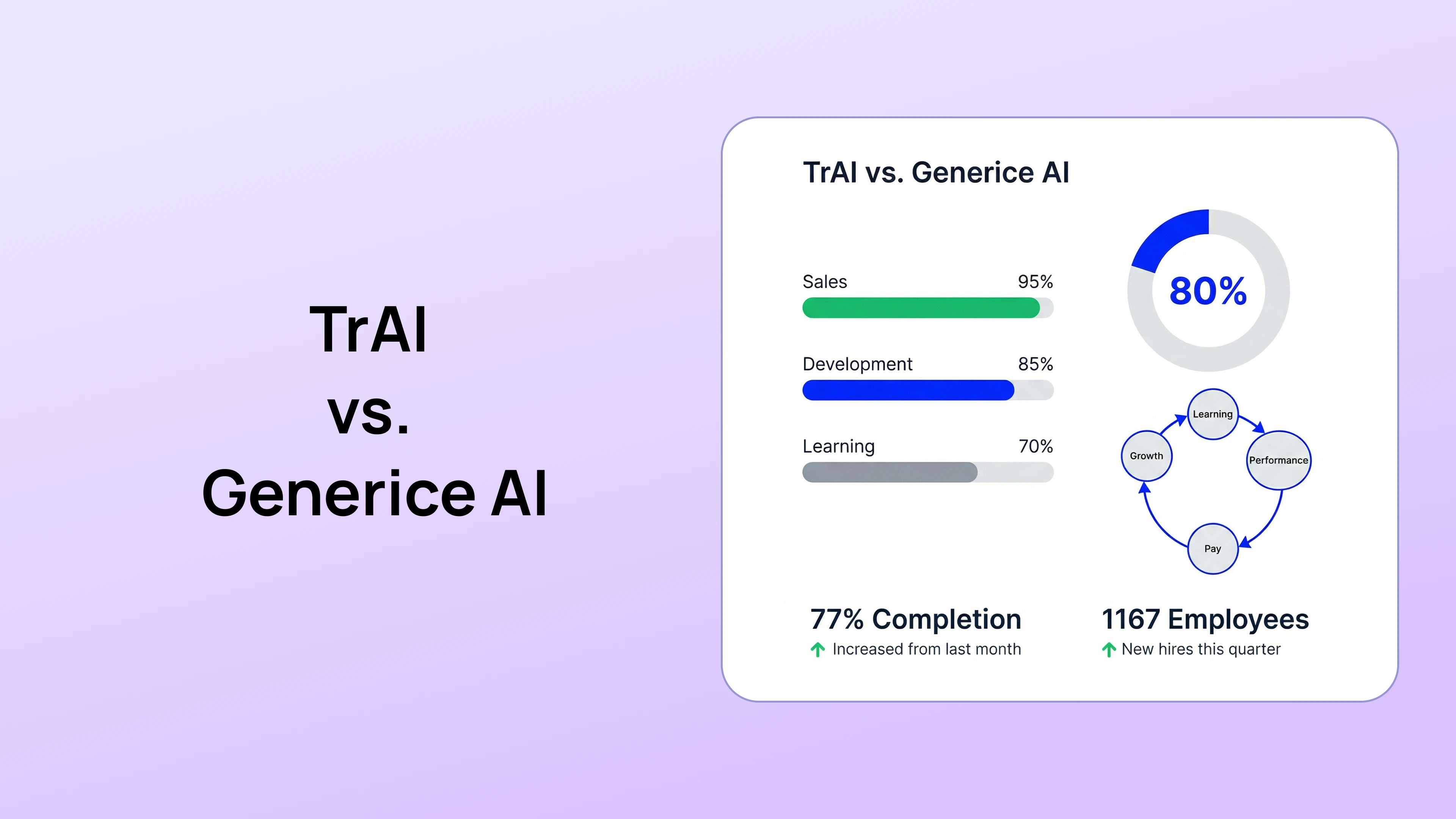

Generic LLM vs. Specialized HR Intelligence

To understand the solution, you must understand the architectural difference between a generic Large Language Model (LLM) and a specialized HR engine.

Use this framework to evaluate any AI tool your HR team is considering.

How TrAI is Architected for Performance Management

We built TrAI to solve the exact problems that generic AI creates. It is not an "AI Wrapper" sitting on top of a public model. It is a highly specialized engine trained exclusively on performance management frameworks, coaching methodologies, and organizational psychology.

Contextual Data Integration (No More Blank Pages)

TrAI does not need a manager to copy and paste data. It is already connected to the systems where work happens.

When a manager sits down to write a quarterly review, TrAI aggregates the data automatically. It pulls the employee's progress from the Goals Management Software, gathers peer feedback submitted over the last 90 days, and analyzes the weekly check-in notes.

TrAI presents the manager with an objective summary of facts. The manager does not ask TrAI to invent a review. The manager uses TrAI to synthesize reality.
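The aggregation step described above can be pictured with a minimal Python sketch. This is an illustration only, not TrAI's actual implementation: the `PeerFeedback` and `ReviewContext` types, the `build_review_context` function, and the sample data are all hypothetical names chosen for this example. It shows the idea of collecting goal progress, check-in notes, and only the peer feedback from the last 90 days, without generating any new claims.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class PeerFeedback:
    submitted_on: date
    text: str


@dataclass
class ReviewContext:
    # Hypothetical container for the facts shown to the manager.
    employee: str
    goal_progress: dict  # goal name -> fraction complete (0.0 to 1.0)
    recent_feedback: list = field(default_factory=list)
    check_in_notes: list = field(default_factory=list)


def build_review_context(employee, goals, feedback, check_ins, today, window_days=90):
    """Gather existing records; nothing in here invents content."""
    cutoff = today - timedelta(days=window_days)
    # Keep only peer feedback submitted inside the review window.
    recent = [f.text for f in feedback if cutoff <= f.submitted_on <= today]
    return ReviewContext(employee, dict(goals), recent, list(check_ins))


# Example with made-up data.
ctx = build_review_context(
    "Employee A",
    goals={"Q3 outbound calls": 0.8},
    feedback=[
        PeerFeedback(date(2024, 9, 1), "Clear handoff notes."),
        PeerFeedback(date(2024, 3, 1), "Older comment."),
    ],
    check_ins=["Week 36: pipeline review"],
    today=date(2024, 9, 30),
)
print(ctx.recent_feedback)  # ['Clear handoff notes.']
```

The point of the design is that the summary is assembled from records that already exist, so the manager starts from facts rather than from a blank prompt.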

The Real-Time Bias Detection Engine

Generic AI often amplifies human bias. If a manager describes a female employee as "aggressive" but a male employee showing the same behavior as "assertive," generic AI will simply rewrite the feedback to sound more polished while maintaining the underlying bias.

TrAI acts as a legal and cultural guardrail. Before a manager submits a review, the engine scans the text for subjective language, personality critiques, or unconscious bias.

- Manager Draft: "John lacks the aggressive energy needed to close enterprise deals."

- TrAI Alert: "This feedback focuses on personality traits rather than observable behaviors. Consider revising to focus on specific sales activities or outcome metrics. Example: 'John missed the Q3 outbound call volume target by 20%.'"

We covered the deep mechanics of this in our Month 1 release: How TrAI Removes Bias from Performance Reviews.
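To make the guardrail idea concrete, here is a deliberately simple Python sketch of a pre-submission check. It is a toy keyword rule, not how TrAI detects bias: the `TRAIT_TERMS` watch list and the `flag_trait_language` and `review_alert` functions are assumptions invented for this example, and a production system would need far more than word matching.

```python
import re

# Hypothetical watch list: words that describe personality rather than behavior.
TRAIT_TERMS = {"aggressive", "abrasive", "bossy", "emotional", "energy", "attitude"}


def flag_trait_language(draft):
    """Return watch-list words found in the draft, in order of first appearance."""
    words = re.findall(r"[a-z']+", draft.lower())
    found = []
    for word in words:
        if word in TRAIT_TERMS and word not in found:
            found.append(word)
    return found


def review_alert(draft):
    """Return an alert string if trait language is found, otherwise None."""
    hits = flag_trait_language(draft)
    if not hits:
        return None
    return (
        "This feedback focuses on personality traits ("
        + ", ".join(hits)
        + ") rather than observable behaviors. "
        "Consider citing specific activities or outcome metrics."
    )


print(review_alert("John lacks the aggressive energy needed to close enterprise deals."))
print(review_alert("John missed the Q3 outbound call volume target by 20%."))  # None
```

The first draft triggers an alert because it contains "aggressive" and "energy"; the revised, metric-based sentence passes without one.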

The Nudge Engine (Proactive vs. Reactive AI)

Generic AI waits for you to ask it a question. Specialized HR AI tells you what you need to know before you ask.

Our Nudge Engine monitors employee data patterns continuously. If it notices that a top performer has not received any positive feedback in 45 days, it sends a private, automated nudge to the manager. It prompts the human to do the human work of connection.
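The 45-day rule above is easy to express as a small check. The Python sketch below is an assumption-laden illustration, not the Nudge Engine itself: the function name `needs_recognition_nudge` and its parameters are hypothetical, and how an employee is classified as a top performer is left outside the example.

```python
from datetime import date


def needs_recognition_nudge(is_top_performer, last_positive_feedback, today, threshold_days=45):
    """Return True when a top performer has gone threshold_days without positive feedback."""
    if not is_top_performer:
        return False
    if last_positive_feedback is None:
        # No positive feedback on record at all.
        return True
    return (today - last_positive_feedback).days >= threshold_days


today = date(2024, 10, 15)
print(needs_recognition_nudge(True, date(2024, 8, 31), today))   # True (45 days)
print(needs_recognition_nudge(True, date(2024, 9, 30), today))   # False (15 days)
```

A scheduled job could run a check like this for each employee and notify only the manager, which keeps the nudge private and leaves the actual conversation to the human.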

The Legal Imperative for CISOs and HR Directors

We are entering an era of AI regulation. Laws governing the use of artificial intelligence in employment decisions are being drafted globally.

If your managers are using unsanctioned, generic AI tools to influence promotions, compensation, or terminations, your company cannot audit the decision-making process. If an employee challenges a termination, you will struggle to defend a review generated by a public LLM, because there is no record of what was entered or how the text was produced.

You must provide a secure, sanctioned alternative. Banning ChatGPT does not work. Managers will just use it on their personal phones. You have to give them a tool that is faster, smarter, and specifically designed for their workflow.

Conclusion

AI is a tool for synthesis, not a substitute for leadership.

The goal of AI in HR is not to replace the manager. The goal is to remove the administrative friction so the manager has more time to actually manage.

When you rely on generic AI, you trade authenticity and security for a few minutes of saved time. When you deploy a specialized engine like TrAI, you give your leaders a secure, bias-free coaching assistant that protects your data and elevates your culture.

Stop letting public algorithms write your performance reviews.

Book a Consultative Demo and discover how PerformSpark provides enterprise-grade AI intelligence built specifically for human resources.

Frequently Asked Questions

What are the main risks of using ChatGPT for performance reviews?

The three primary risks are Data Privacy violations (inputting sensitive employee data into a public model), AI Hallucination (the model inventing false achievements or failures), and Bias Amplification (the model echoing unconscious biases found in its training data).

Can employees tell if AI wrote their performance review?

Yes. Generic AI relies on predictable sentence structures, corporate jargon, and a sterilized tone. Employees quickly recognize when feedback lacks specific, localized context or emotional nuance, which severely damages trust between the employee and the manager.

How is specialized HR AI different from generative AI?

Specialized HR AI is trained on closed, proprietary datasets focused strictly on organizational psychology and performance frameworks. It operates within a secure enterprise environment, does not use your data to train public models, and includes specific guardrails to detect HR compliance issues and unconscious bias.

Will AI replace HR professionals or managers?

No. AI excels at data synthesis, pattern recognition, and administrative drafting. It fails at empathy, complex conflict resolution, and strategic career coaching. AI replaces the paperwork, not the people. It allows managers to spend less time typing and more time coaching.

How does TrAI protect employee data privacy?

TrAI operates within the secure PerformSpark infrastructure. All data is encrypted in transit and at rest. Unlike public LLMs, the inputs provided by your managers and the feedback data generated by your company are never used to train external or public AI models. Your data remains strictly your own.
